74% of Engineering Leaders Say AI Already Outperforms Humans on Basic Design Checks

Key Takeaways

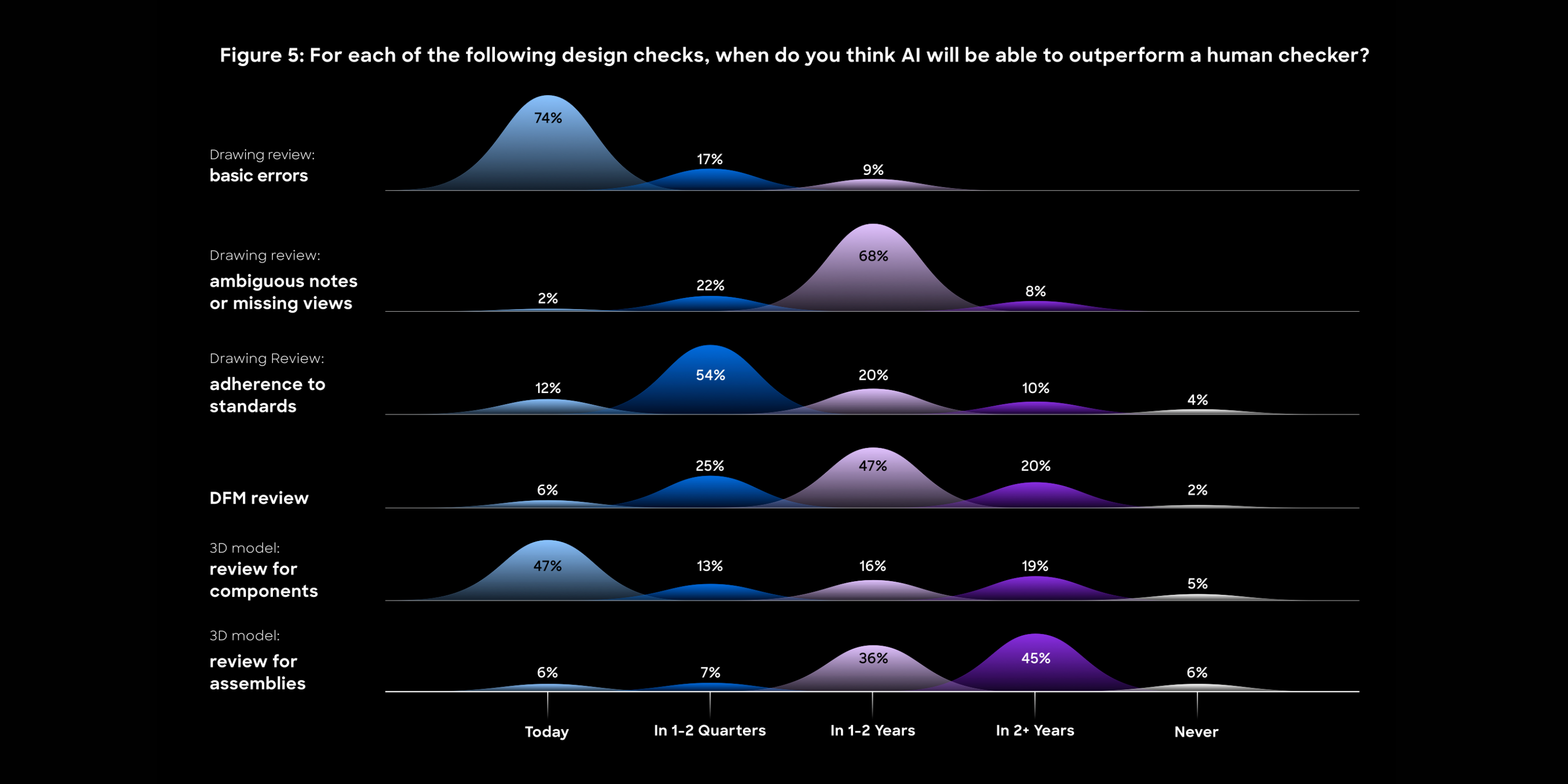

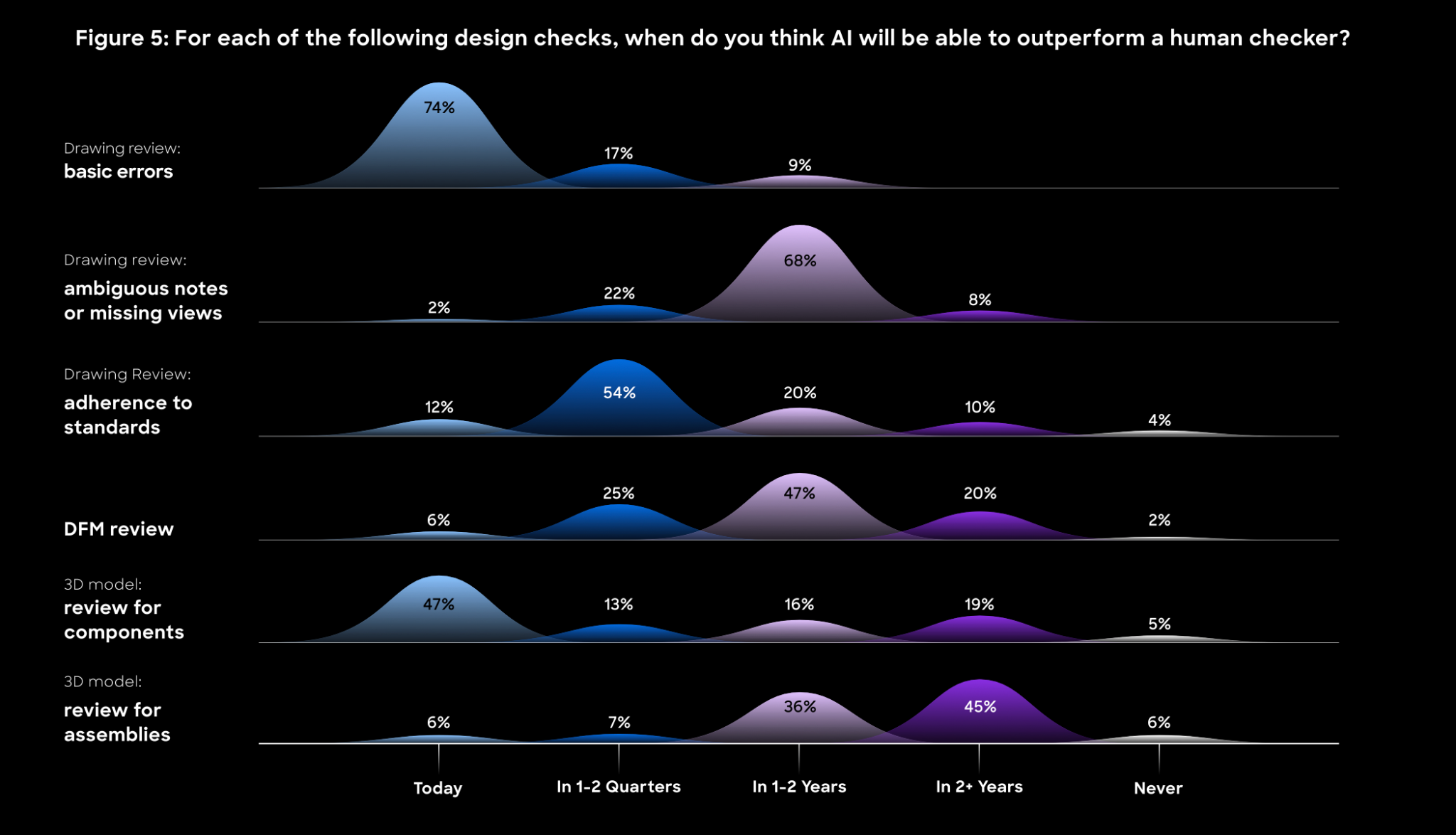

According to CoLab’s Near-Term Impact of AI on Engineering, a survey of 250 engineering leaders at large manufacturing organizations, most respondents already believe AI can outperform human checkers at identifying basic design issues.

The survey data makes clear:

- 74% of engineering leaders say AI can already outperform human checkers at identifying basic design errors;

- Engineering leaders see AI adoption unfolding in stages: basic error checking now, ambiguous notes and standards adherence within one to two quarters, and DFM and assembly checks within one to two years; and

- Human expertise isn’t going away. AI automates repeatable checks so engineers can focus on the decisions that require more nuanced judgment and design intent.

That 74% figure may grow quickly. An additional 17% of engineering leaders expect AI to reach the same bar within the next quarter. AI capabilities have matured faster than most teams have moved to deploy them.
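As a quick arithmetic check on those two figures, the sketch below adds the shares together. The percentages and the respondent count come from the survey text; the headcounts are derived here and are approximate, since the published percentages are presumably rounded. The helper name `approx_headcount` is illustrative only.

```python
# Figures taken from the article text.
RESPONDENTS = 250        # engineering leaders surveyed
ALREADY_PCT = 74         # say AI already outperforms humans on basic checks
NEXT_QUARTER_PCT = 17    # expect AI to reach that bar within the next quarter


def approx_headcount(pct: int, total: int = RESPONDENTS) -> int:
    """Approximate number of respondents behind a published percentage."""
    return round(total * pct / 100)


# Share of leaders who expect AI to clear the bar by the end of next quarter.
combined_pct = ALREADY_PCT + NEXT_QUARTER_PCT

print(f"Already there: {ALREADY_PCT}% (about {approx_headcount(ALREADY_PCT)} leaders)")
print(f"By next quarter: {combined_pct}%")
```

In other words, roughly nine in ten respondents expect AI to match or beat human checkers on basic design errors by the end of the next quarter.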

The Timeline Most Engineering Leaders Already See

CoLab’s survey didn’t just ask respondents whether AI could outperform humans during design review. We also asked which types of checks, and when.

For basic drawing errors, the majority say AI is already there. For ambiguous notes and missing views, 68% expect AI to reach parity within one to two quarters. For adherence to organizational standards, the most common response was one to two quarters out. For DFM reviews and 3D model assembly checks, most leaders expect parity within one to two years.

The pattern is consistent. Rules-based, high-volume checks are where AI performs very well today. It will take longer for confidence to build in automated checks that require contextual judgment about manufacturability, assembly interaction, or design intent. Undoubtedly, some of those processes will always require a human in the loop.

One finding worth noting: 47% of respondents say AI can already handle 3D model reviews for components. Rules-based tools have checked component geometry against defined criteria for years. LLMs are now layering contextual understanding on top, which explains why adoption at this tier has moved faster than most teams expected.

What This Means For Engineering Teams Today

Engineering teams running 35,000+ drawing reviews per year have a category of work where AI is already the more consistent performer. Running those checks manually is now a choice, not a necessity.

That doesn’t mean pulling engineers out of the review process entirely. It means redirecting their attention to the calls that truly need it, such as the cost-versus-tolerance tradeoff that no standard can resolve, or the design intent behind a feature that isn’t captured anywhere in the drawing. These are the issues that slip through even well-run design reviews.

The Gap Between Capability and Deployment

The sharper finding in the data isn’t that AI outperforms humans on basic checks. It’s that most teams haven’t restructured their design review process to reflect that fact.

The barriers are largely operational, involving data hygiene, change management, and integration with existing PLM systems. These are real obstacles that take time and organizational will to clear. But they are deployment problems, not capability problems. The case for AI-assisted drawing review has been made. The next step is about execution.

On the execution side, AI tools help most when they break the inertia of getting started. In design review, that starting point is a first-pass check. CoLab’s AutoReview runs before the human review, checking drawings against your organization’s specific standards and guidelines. It flags missing tolerances, ambiguous notes, and GD&T non-compliance directly on the file. Engineers pick up where AutoReview leaves off, validate the findings, and keep the review moving. Over time, the feedback captured across those reviews (the issues raised, the rationale, the resolutions) builds the foundation that makes the next tier of automation possible.
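To make the idea of a rules-based first pass concrete, here is a minimal Python sketch. It is not AutoReview's implementation or API. Every name in it (`Finding`, `first_pass`, the rule labels, the list of ambiguous phrases) is hypothetical, and it assumes drawing notes have already been extracted as plain text lines. It covers only two of the check types mentioned above, missing tolerances and ambiguous notes; GD&T compliance is out of scope for a toy like this.

```python
import re
from dataclasses import dataclass


@dataclass
class Finding:
    rule: str      # which hypothetical rule fired
    line_no: int   # 1-based position of the note
    text: str      # the note as written


# Assumed house rules, for illustration only.
AMBIGUOUS_PHRASES = ("as required", "or equivalent", "tbd")
DIMENSION = re.compile(r"\b\d+(\.\d+)?\s*(mm|in)\b")  # e.g. "40 mm"
TOLERANCE = re.compile(r"(±|\+/-)\s*\d")              # e.g. "±0.1"


def first_pass(notes: list[str]) -> list[Finding]:
    """Flag notes for human follow-up; this does not replace the reviewer."""
    findings = []
    for line_no, note in enumerate(notes, start=1):
        # A dimension with no tolerance on the same line is flagged.
        if DIMENSION.search(note) and not TOLERANCE.search(note):
            findings.append(Finding("missing_tolerance", line_no, note))
        # Vague wording is flagged once per note.
        if any(phrase in note.lower() for phrase in AMBIGUOUS_PHRASES):
            findings.append(Finding("ambiguous_note", line_no, note))
    return findings


sample_notes = [
    "DIA 12.5 mm ±0.1",
    "LENGTH 40 mm",
    "DEBURR AS REQUIRED",
]
for finding in first_pass(sample_notes):
    print(finding.line_no, finding.rule, "->", finding.text)
```

The point of the sketch is the division of labor: deterministic rules surface the repeatable issues, and the engineer decides what each flagged note actually needs.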

The operational barriers are real, but they are surmountable. Meanwhile, AI’s capabilities for engineering are well established, and other teams are taking action. The question is no longer whether AI belongs in your design review process. It’s how much longer your team can afford to run reviews the old way.

Want the full data behind AI’s impact on design review? Read our latest survey report.

This article is part of the CoLab Research Reports series, where we publish findings from both engineering leader surveys and aggregated, anonymized CoLab data.

The Near-Term Impact of AI on Engineering