45% of design standards and guidelines are not documented, up to date or consistently referenced in design reviews

Key Takeaways

Engineering leaders agree that nearly half (45%) of their company’s design standards and guidelines are not 1) documented, 2) up to date, or 3) consistently referenced in design reviews.

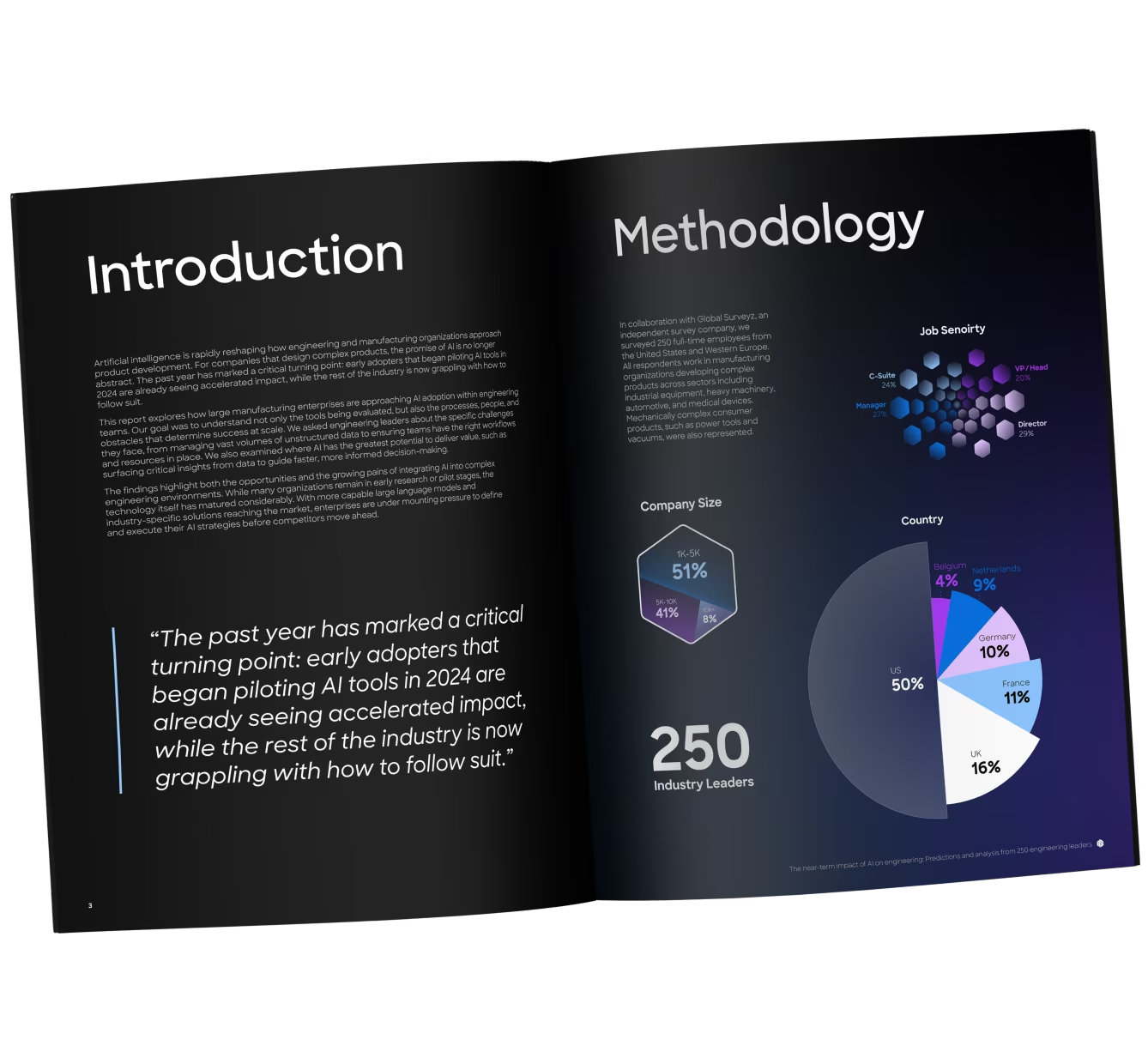

This finding came from a recent survey of 250 engineering leaders at manufacturing companies with 1,000 or more employees. Respondents ranged from Managers to Directors and VPs of Engineering, in industries like automotive, consumer hardware, heavy machinery, medical device and industrial equipment.

In other words, this isn’t a problem confined to a few specialized teams or industries. It’s an industry-wide trend.

Even more telling is the fact that 95% of this same group say it’s important (35%) or critically important (61%) that their engineering teams follow design standards and guidelines.

The stakes behind each rating can’t be overstated:

- Critically important: if design standards are not followed, it creates safety and regulatory risk or we could lose a customer

- Important: failure to follow design standards creates significant risk of missing our cost, quality, or product performance targets

What’s the disconnect between the importance of design standards and their actual application?

In short, there’s broad consensus that design standards are so important that failing to follow them risks missing cost, quality, or product performance targets, or, worse, creating regulatory risk or even losing a customer.

Yet nearly half of these design standards aren’t documented, up to date, or consistently referenced in design reviews. And in our conversations with engineering leaders in heavily regulated industries, we heard that even when design standards are applied consistently, the manual effort is so great that it slows time-to-market.

All of which raises the question: Why don’t engineering teams apply design standards effectively today, and how can you fix it?

Throughout this article, we’ll dive into:

- The reason teams don’t apply design standards effectively

- The risks of leaving this problem unsolved

- How to use design standards more effectively

- Where AI can help, now and in the near future

Let’s dig in.

Engineering teams can’t apply design standards effectively due to knowledge silos

Before we discuss what knowledge silos are and why they prevent engineering teams from applying design standards effectively, let’s start with the everyday problems teams experience when design standards and guidelines are poorly applied.

There are three problems that masquerade as causes but are actually symptoms:

- For manufacturing companies without strict regulations, design standards are applied inconsistently, so errors slip through reviews and into late-stage development.

- For companies with strict regulatory and compliance requirements, applying design standards is nonnegotiable, but the process requires intensive human intervention at nearly every step. As a result, applying design standards is slow and arduous.

- The definitions are fuzzy. In some industries, a standard might be a formal rule, like ASME Y14.5 for GD&T. A guideline might be an internal rule passed along from lessons learned on past programs, such as: “All threaded holes in aluminum castings must use coarse threads (M8 × 1.25) with a minimum thread engagement of 1.5× diameter.” And no one knows which is which, or whether one takes priority over the other.
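One way to remove that ambiguity is to record, for every rule, whether it is an external standard or an internal guideline and how much weight it carries. The sketch below encodes the threaded-hole guideline quoted above as a checkable rule. The `DesignRule` structure, the `priority` scale, and the rule ID are hypothetical; the pitch table covers only a few ISO metric coarse sizes.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List


class RuleKind(Enum):
    STANDARD = "standard"    # external, published standard (e.g. ASME Y14.5)
    GUIDELINE = "guideline"  # internal rule from lessons learned


@dataclass(frozen=True)
class DesignRule:
    rule_id: str     # hypothetical ID scheme
    kind: RuleKind
    priority: int    # hypothetical: 1 = blocks release, 2 = must justify, 3 = advisory
    text: str


THREAD_RULE = DesignRule(
    rule_id="GL-CAST-001",
    kind=RuleKind.GUIDELINE,
    priority=1,
    text="Threaded holes in aluminum castings: coarse threads, "
         "thread engagement >= 1.5x diameter",
)

# ISO metric coarse pitches (mm) for a few common nominal diameters.
COARSE_PITCH_MM = {6: 1.0, 8: 1.25, 10: 1.5, 12: 1.75}


def check_threaded_hole(diameter_mm: int, pitch_mm: float,
                        engagement_mm: float) -> List[str]:
    """Return violations of THREAD_RULE for one hole (empty list = compliant)."""
    issues = []
    coarse = COARSE_PITCH_MM.get(diameter_mm)
    if coarse is None:
        issues.append(f"M{diameter_mm}: no coarse pitch on file")
    elif pitch_mm != coarse:
        issues.append(f"M{diameter_mm}x{pitch_mm}: use coarse pitch {coarse} mm")
    min_engagement = 1.5 * diameter_mm
    if engagement_mm < min_engagement:
        issues.append(f"engagement {engagement_mm} mm < {min_engagement} mm")
    return issues
```

For example, `check_threaded_hole(8, 1.25, 12.0)` returns an empty list, while `check_threaded_hole(8, 1.0, 10.0)` returns two violations (fine pitch and insufficient engagement). Making `kind` and `priority` explicit fields is the point: the question of which is which gets answered once, in the rule itself.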

So, why do these problems exist? One answer trumps the rest: knowledge silos.

The moment you need to reference a design standard or guideline, you don’t know where to find it – let alone check your design against it. Or, worse, you’re a relatively new hire and you don’t even know your company has unique design guidelines.

This happens because that information is buried in:

- Some OneDrive Inception folder (a folder within a folder within a folder) with an obscure naming convention

- A spreadsheet with hundreds of line items

- A file cabinet circa 1993 amid hundreds of other paper documents

- Someone’s head

- PLM, with strict licensing and access controls

These are knowledge silos, and they’re slowly killing design review quality and speed across nearly every manufacturing industry. Engineering teams know these silos exist, but few have traced them back to the massive downstream problems they cause. Making that connection is the key to fixing the larger issue.

One design standard failure away from a product recall

The natural next question is: if nearly every engineering leader says following design standards and guidelines is important, or even critical, to a product’s success, why hasn’t this been a bigger issue?

The simplest answer is: It wasn’t until it was.

Twenty years ago, products were simpler, teams were smaller, and everyone worked in the same office. Now, products are more complex, and teams are larger, more specialized, and globally dispersed.

Yet, the tools and processes engineering teams use to make those products and collaborate with those specialized, globally dispersed teams haven’t changed.

And now, teams are feeling the effects:

- Product launch delays

- Unnecessary rework as an accepted reality

- High scrap rates in prototyping or production

- Warranty claims

- Lower gross margins

- In worst-case scenarios: product recalls

As these problems start to hit the bottom line, company leadership is scrutinizing earlier stages of product development: namely, engineering. And engineers know what the problems are. The tools and systems from 20 years ago don’t work today. These legacy tools and systems slow design cycles, let errors slip through the cracks, and delay product launches, setting off the myriad issues that follow. Poorly applied design standards and guidelines are one part of this slippery slope.

So, of course, every engineering leader agrees that design standards and guidelines are critical to product success. They always were. But only now, with engineering processes under the magnifying glass, are engineering leaders realizing how inconsistently and slowly their teams apply these standards and guidelines during design reviews.

Real-world design standard failures

1. Unnecessary and Costly Rework – Boeing 787 Wiring Harnesses

- Failure: Lack of enforced CAD modeling and wiring design standards across global teams. Different sites used incompatible systems, producing wiring that didn’t align at integration.

- Impact: Thousands of harnesses had to be reworked manually on the assembly line. Boeing absorbed ~$1 billion in rework and abnormal production costs.

2. High Scrap – Medical Device Components Study

- Failure: Inconsistent application of manufacturability guidelines (minimum wall thicknesses, thread designs) led to molding defects.

- Impact: A medical device manufacturer reported scrap rates of 70,000 ppm, costing hundreds of thousands annually. Once DFM guidelines were applied systematically, scrap fell by 85%.

3. RMAs / Warranty Claims – Apple iPhone 4 “Antennagate”

- Failure: Ignoring internal RF performance guidelines in pursuit of sleek industrial design. Holding the phone a certain way blocked antenna performance.

- Impact: Millions of RMAs and warranty claims; Apple offered free bumper cases or cash payouts, and faced a class-action lawsuit.

4. Lower Gross Margins – Tesla Model 3 Complexity

- Failure: Over-engineered body structure with excessive welds and fasteners, deviating from DFM guidelines favoring simplified part consolidation.

- Impact: Slower ramp-up, higher production costs, and compressed margins until re-engineering reduced weld count and assembly complexity.

5. Delayed Launches – Airbus A380 Wiring

- Failure: Inconsistent application of CAD and data exchange standards across design centers. German and French teams used different CATIA versions, producing wiring harnesses too short to install.

- Impact: Launch delayed by nearly two years, costing Airbus ~$6 billion and undermining profitability.

6. Product Recalls – Takata Airbags

- Failure: Inconsistent application of safety and materials guidelines; ammonium nitrate inflators were used without stabilizing agents.

- Impact: Inflators ruptured explosively, leading to the largest automotive recall in history (100M+ units), billions in losses, and Takata’s bankruptcy.
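To put the medical device figures from example 2 in perspective: ppm here means scrapped parts per million produced. Assuming the 85% is a relative reduction from the original rate, the numbers work out to:

$$
\frac{70{,}000}{10^{6}} = 7\%, \qquad 70{,}000 \times (1 - 0.85) = 10{,}500\ \text{ppm} = 1.05\%
$$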

These are extreme examples, but we chose them for a reason: companies often connect design errors involving standards and guidelines to downstream problems only when it’s too late. Forward-thinking manufacturing companies solve this issue now, before it becomes a massive problem. They do it by investing in how they work together and make design decisions, rather than in post-crisis decisions.

Applying design standards to every review: A workflow

The ideal workflow for applying standards and guidelines to every design review would look something like:

- Run the drawing(s) through an AI Peer Checker. This Peer Checker would be trained on your company’s design standards and guidelines, lessons learned, and past reviews. It would highlight errors on the drawing, like inconsistencies in technical data, ambiguous notes, non-conformance to standards and guidelines, conflicts with lessons learned, and DFX optimizations.

- An engineer reviews the AI’s suggestions, adding commentary where necessary. This commentary would train the model to become more accurate over time and help it pick up on the nuances of a company’s design guidelines compared to typical standards.

- Share the drawing with other key stakeholders. Anyone should be able to review the drawing in the same interface, use the AI Peer Checker, reply to its comments, and tag in other subject matter experts. This is especially critical for design standards and guidelines because one engineer or group is rarely responsible for compliance with every design guideline. QA, Manufacturing, Tooling, and even Supplier Quality should be able to access the review and ensure conformance to design standards and guidelines.

- Build a design standards knowledge model as a byproduct of doing the review. When an engineer or SME accepts the AI commentary or adds feedback of their own, this should get tracked and stored in a database to feed the system. The system can learn from this review to improve the next one. This way a company’s design standards and guidelines are no longer static resources wasting away in a file cabinet, but dynamic rules that evolve over time.
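At its simplest, the “knowledge model as a byproduct” step can be approximated by logging every reviewer decision against the rule that triggered it. The sketch below is a simple stand-in, not how any particular product works: it uses a plain tally rather than a trained model, and `Finding`, `ReviewLog`, the 50% threshold, and the rule IDs are hypothetical. Rules whose findings reviewers mostly reject surface as candidates for refinement.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Finding:
    rule_id: str
    message: str
    accepted: Optional[bool] = None  # None until a reviewer responds
    note: str = ""


@dataclass
class ReviewLog:
    """Hypothetical store of reviewer decisions, filled in as reviews happen."""
    decisions: List[Finding] = field(default_factory=list)

    def record(self, finding: Finding, accepted: bool, note: str = "") -> None:
        finding.accepted = accepted
        finding.note = note
        self.decisions.append(finding)

    def acceptance_rate(self, rule_id: str) -> Optional[float]:
        """Share of this rule's findings that reviewers accepted."""
        relevant = [f for f in self.decisions if f.rule_id == rule_id]
        if not relevant:
            return None
        return sum(1 for f in relevant if f.accepted) / len(relevant)

    def rules_to_revisit(self, threshold: float = 0.5,
                         min_reviews: int = 3) -> List[str]:
        """Rules whose findings are mostly rejected: candidates for refinement."""
        counts = Counter(f.rule_id for f in self.decisions)
        return sorted(
            rule_id for rule_id, n in counts.items()
            if n >= min_reviews and self.acceptance_rate(rule_id) < threshold
        )


log = ReviewLog()
for ok in (False, False, True):
    log.record(Finding("GL-CAST-001", "fine thread in casting"), ok)
log.record(Finding("Y14.5-TOL", "hole callout missing tolerance"), True)
print(log.rules_to_revisit())  # ['GL-CAST-001']
```

The `min_reviews` guard keeps a rule from being flagged on one or two rejections; a real system would also use the reviewer notes, which this sketch only stores.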

This workflow addresses the major issues that prevent engineering teams from applying design standards 1) consistently and 2) quickly. Having design standards and guidelines available in a single place, in the context of the model or drawing, solves the problem for:

- Less regulated industries, like industrial machinery, which suffer from inconsistently applied design standards and frequent errors as a result. Attaching the relevant design standards to every review ensures consistency.

- Heavily regulated industries, like medical device and aerospace, which suffer from slow, arduous manual intervention when applying design standards. Having the design standards readily available for every model or drawing review significantly speeds the process.
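Attaching the relevant design standards to every review implies filtering the full library down to what applies to a given part. A minimal sketch, assuming each rule is tagged with the materials and processes it covers; the tags, rule IDs, and part number are hypothetical.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class Rule:
    rule_id: str
    text: str
    materials: FrozenSet[str] = frozenset()  # empty set = applies to every material
    processes: FrozenSet[str] = frozenset()  # empty set = applies to every process


@dataclass(frozen=True)
class DrawingContext:
    part_number: str
    material: str
    process: str


def applicable_rules(rules: List[Rule], ctx: DrawingContext) -> List[Rule]:
    """Keep only the rules whose material and process scope match the drawing."""
    return [
        r for r in rules
        if (not r.materials or ctx.material in r.materials)
        and (not r.processes or ctx.process in r.processes)
    ]


RULES = [
    Rule("Y14.5-GEN", "Dimension and tolerance per ASME Y14.5"),
    Rule("GL-CAST-001", "Coarse threads, engagement >= 1.5x diameter",
         materials=frozenset({"aluminum"}), processes=frozenset({"casting"})),
    Rule("GL-ABS-WALL", "Wall >= 2.5 mm, unsupported span <= 50 mm",
         materials=frozenset({"ABS"}), processes=frozenset({"injection molding"})),
]

ctx = DrawingContext("PN-1001", "ABS", "injection molding")
print([r.rule_id for r in applicable_rules(RULES, ctx)])
# ['Y14.5-GEN', 'GL-ABS-WALL']
```

Treating an empty scope as “applies everywhere” lets general standards like ASME Y14.5 ride along on every review without being tagged with every material and process.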

This system exists today and it’s called CoLab.

Where can AI make design standards more effective?

Today’s mechanical engineering AI tools already promise faster, more effective workflows for engineers, saving one of the most valuable resources for teams today: time. For applying design standards and guidelines, AI tools can already improve many of the use cases here, and will likely improve most, if not all, of them in the very near future.

Drawing reviews

Today, engineers manually review 20,000–50,000+ drawings annually: flipping through PDFs, relying on memory, or (occasionally) applying institutional knowledge.

AI can make this process more effective by:

- Automatically checking drawings and models for compliance with GD&T standards, tolerances, materials specs and DFX best practices.

- Surfacing errors instantly, e.g., “Hole callout missing tolerance” or “Wall thickness is below company minimum.”

- Ensuring every review applies the right rules at the right time.
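The two example findings above can be expressed as simple rule checks. This sketch assumes the hard part, extracting hole callouts and wall thicknesses from a PDF or model, has already happened; the data classes and the 2.0 mm company minimum are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class HoleCallout:
    label: str
    diameter_mm: float
    tolerance_mm: Optional[float]  # None = no tolerance on the callout


@dataclass
class Wall:
    label: str
    thickness_mm: float


def review_drawing(holes: List[HoleCallout], walls: List[Wall],
                   min_wall_mm: float) -> List[str]:
    """Return findings for one drawing's extracted features."""
    findings = []
    for hole in holes:
        if hole.tolerance_mm is None:
            findings.append(f"{hole.label}: Hole callout missing tolerance")
    for wall in walls:
        if wall.thickness_mm < min_wall_mm:
            findings.append(
                f"{wall.label}: Wall thickness {wall.thickness_mm} mm is below "
                f"company minimum ({min_wall_mm} mm)"
            )
    return findings


findings = review_drawing(
    holes=[HoleCallout("H1", 6.0, 0.05), HoleCallout("H2", 8.0, None)],
    walls=[Wall("W1", 1.8), Wall("W2", 3.0)],
    min_wall_mm=2.0,  # hypothetical company minimum
)
for f in findings:
    print(f)
```

Each finding names the feature it applies to, so a reviewer can jump straight to the problem instead of re-reading the whole drawing.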

This tool exists today in CoLab’s AutoReview.

Design creation (inside CAD)

Standards are often applied after CAD modeling, at release or review. By then, errors require some form of rework.

AI can make this process more effective by:

- Embedding a “real-time peer checker” inside CAD tools.

- Flagging non-compliant features while the engineer is modeling (e.g., “This fillet radius is below the minimum allowed for aluminum casting”).

- Providing context and corrective suggestions based on internal guidelines, like “All plastic housings must maintain a minimum wall thickness of 2.5 mm for ABS, with no unsupported spans exceeding 50 mm, to avoid sink marks and warpage.”
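No CAD vendor API is assumed here, so the sketch below only models the idea: a checker that runs registered rules whenever a feature changes and returns messages to show the engineer. `LiveChecker`, `on_feature_changed`, and `WallFeature` are hypothetical names; the limits come from the ABS housing guideline quoted above.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class WallFeature:
    name: str
    material: str
    thickness_mm: float
    unsupported_span_mm: float


# Limits from the example guideline: ABS housings, wall >= 2.5 mm, span <= 50 mm.
ABS_MIN_WALL_MM = 2.5
ABS_MAX_SPAN_MM = 50.0


def check_abs_housing(f: WallFeature) -> List[str]:
    """Apply the ABS housing guideline to one wall feature."""
    if f.material != "ABS":
        return []
    msgs = []
    if f.thickness_mm < ABS_MIN_WALL_MM:
        msgs.append(f"{f.name}: wall {f.thickness_mm} mm is below the "
                    f"{ABS_MIN_WALL_MM} mm minimum for ABS housings")
    if f.unsupported_span_mm > ABS_MAX_SPAN_MM:
        msgs.append(f"{f.name}: unsupported span {f.unsupported_span_mm} mm "
                    f"exceeds the {ABS_MAX_SPAN_MM} mm limit for ABS housings")
    return msgs


class LiveChecker:
    """Hypothetical hook a CAD plugin would call each time a feature changes."""

    def __init__(self, checks: List[Callable[[WallFeature], List[str]]]):
        self.checks = checks

    def on_feature_changed(self, feature: WallFeature) -> List[str]:
        return [msg for check in self.checks for msg in check(feature)]


checker = LiveChecker([check_abs_housing])
for msg in checker.on_feature_changed(WallFeature("Side wall", "ABS", 2.0, 60.0)):
    print(msg)
```

Keeping each guideline as a small, independent check function means new rules can be registered without touching the hook itself.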

This tool doesn’t yet exist. But as AI evolves, we expect this capability to exist in the next 1-2 years.

Updating standards across teams

Even if standards are updated centrally, engineers may use outdated PDFs or checklists for months (or years).

AI can make this process more effective by:

- Centralizing standards into a single digital ruleset.

- Enforcing guideline updates automatically, at the moment of change, so there’s no lag time or manual retraining required.

- Giving engineers live checks as they work, based on the most current rules.
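Centralizing standards so that updates take effect immediately comes down to one design choice: checks look up the current rule at run time rather than carrying their own copy. A minimal sketch of a versioned registry; the rule ID, dates, and thresholds are hypothetical (the 2.5 mm value echoes the ABS example earlier).

```python
from dataclasses import dataclass
from datetime import date
from typing import Dict, List


@dataclass(frozen=True)
class RuleVersion:
    rule_id: str
    version: int
    effective: date
    min_wall_mm: float


class RuleRegistry:
    """Hypothetical single source of truth for rule versions."""

    def __init__(self) -> None:
        self._history: Dict[str, List[RuleVersion]] = {}

    def publish(self, rv: RuleVersion) -> None:
        history = self._history.setdefault(rv.rule_id, [])
        if history and rv.version <= history[-1].version:
            raise ValueError(f"{rv.rule_id}: version must increase")
        history.append(rv)

    def current(self, rule_id: str, on: date) -> RuleVersion:
        """Highest version already in effect on the given date."""
        in_effect = [v for v in self._history.get(rule_id, []) if v.effective <= on]
        if not in_effect:
            raise KeyError(f"no version of {rule_id} in effect on {on}")
        return max(in_effect, key=lambda v: v.version)


def wall_ok(registry: RuleRegistry, thickness_mm: float, on: date) -> bool:
    # Every check looks the rule up at run time instead of using a cached copy.
    return thickness_mm >= registry.current("GL-ABS-WALL", on).min_wall_mm


registry = RuleRegistry()
registry.publish(RuleVersion("GL-ABS-WALL", 1, date(2024, 1, 1), 2.0))
registry.publish(RuleVersion("GL-ABS-WALL", 2, date(2025, 3, 1), 2.5))
print(wall_ok(registry, 2.2, date(2024, 6, 1)))  # True: v1 (2.0 mm) in effect
print(wall_ok(registry, 2.2, date(2025, 6, 1)))  # False: v2 (2.5 mm) in effect
```

Keeping the full version history, rather than overwriting the rule, also lets a team answer which rule a past design was checked against.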

This tool doesn’t quite exist today, though companies like NVIDIA and Palantir claim to offer a tangential way to do this through deep data integrations. As AI continues to evolve rapidly, however, we expect a solution in the next 1-2 years.

Supplier and cross-team alignment

External suppliers and remote teams often interpret or apply standards differently. Or worse, your team never shares those standards with them, so they aren’t applied in the first place. Either way, your team has to add an extra step to ensure suppliers abide by internal design guidelines.

AI can make this process more effective by:

- Extending the same internal “AI peer checker” to suppliers, so they can run checks before submitting designs.

- Reducing back-and-forth, misinterpretation, and rejected parts.

- Ensuring a shared interpretation of the same rules.
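A shared interpretation is easiest when suppliers run the exact same rule definitions rather than re-reading a PDF. This sketch serializes a ruleset to JSON and shows that the supplier’s copy produces identical findings. The rule IDs, attribute names, and JSON format are hypothetical; a real exchange would likely also need access control and versioning.

```python
import json
from dataclasses import asdict, dataclass
from typing import Dict, List


@dataclass(frozen=True)
class ThresholdRule:
    rule_id: str
    attribute: str  # part attribute the rule constrains
    minimum: float


def run_checks(rules: List[ThresholdRule], part: Dict[str, float]) -> List[str]:
    """Return IDs of violated rules; a missing attribute counts as a violation."""
    return sorted(
        r.rule_id for r in rules
        if part.get(r.attribute, float("-inf")) < r.minimum
    )


def export_rules(rules: List[ThresholdRule]) -> str:
    return json.dumps([asdict(r) for r in rules])


def import_rules(payload: str) -> List[ThresholdRule]:
    return [ThresholdRule(**d) for d in json.loads(payload)]


internal = [
    ThresholdRule("GL-ABS-WALL", "wall_mm", 2.5),
    ThresholdRule("GL-CAST-ENG", "engagement_ratio", 1.5),
]
supplier_copy = import_rules(export_rules(internal))

part = {"wall_mm": 2.2, "engagement_ratio": 1.6}
assert run_checks(supplier_copy, part) == run_checks(internal, part)
print(run_checks(supplier_copy, part))  # ['GL-ABS-WALL']
```

Treating a missing attribute as a violation is a deliberate choice in this sketch: a supplier submission that omits data is flagged rather than silently passed.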

This tool exists today in CoLab’s AutoReview.

If this article convinced you of anything, we hope it’s this: if you’re not already exploring AI tools built for mechanical engineering and manufacturing, you’re behind. But it’s not too late. A simple use case, like applying design standards and guidelines to drawing reviews, is a great place to start, and you can get started today.

This article is part of the CoLab Research Reports series, where we publish findings from both engineering leader surveys and aggregated, anonymized CoLab data.
